This began as feedback on a survey and became a bit of a manifesto. This serves as my current position on so-called ‘generative artificial intelligence’ and use of such in general and specifically by ethnomethodologists and conversation analysts (as of 10/03/2025).

I will not retread the obviously evil and already well-elaborated issues with this technology (e.g., environmental damage, surveillance, reliance on theft, the industry’s complicity with injustices like the Gazan genocide and the recent murder of over a hundred school children in Iran, its technical incompetence etc. etc.). It is my position that these technologies are anti-human in essence and in practice. Part of what originally made ethnomethodology and conversation analysis such a revelation to me as a psychology student is that it actually involved looking at PEOPLE IN THE WORLD (as opposed to studying contrived settings and ignoring the interpretative gap; Edwards, 2012). Rather than reducing people into statistics and constructs, people, their affairs, and the world THEY live in are approached with the aim of building a picture of the world as it is to them. Compared to much of the social sciences, EMCA has always felt more actually and earnestly concerned with people.

So-called artificial intelligence tools are fundamentally tools that erase humans and human experience. I don’t mean this as an “I’m scared AI will do my job” human replacement. So-called generative AI cannot do my job, but people with power over me can act like it can and push me out of the picture as long as they think it accomplishes whatever they want from it. It is human erasure in that every single use case is a place where a competent, expert, human person has been removed from the equation. Requesting information removes an expert person the user could’ve asked; requesting an artifact (e.g., an image, collated data, etc.) removes an artist or technician the user could’ve employed; requesting summaries of some text or collection of texts removes the original source and those who have put time into understanding what the user didn’t care to. It encourages isolation. In the context of EMCA, this seems inconsistent with, and actually AGAINST, the methodological (and thus the moral) commitment to prioritise participants’ orientations, which is a fundamental hallmark of our field and work.

Consider AI use in relation to transcription. Automating transcribing, formatting of transcripts, or searching transcripts for structures etc. replaces the person analyst with a robot. This seems particularly obviously ridiculous from a CA perspective because transcription IS analysis (Bolden, 2015) and analysis requires competence derived from our MEMBERSHIP (ten Have, 2002), notably something that the so-called AI does not have (and if it does, membership in what, exactly?). Sacks (1984, p. 26), in describing the virtue of tape-recorded conversation, noted ‘I could transcribe them [the tape-recorded talk] somewhat and study them extendedly – however long it might take.’ To him, the tapes stood as a ‘“good-enough” record’, but it was the time spent with them that the recordings enabled that was key.

Sticking to the specific domain of transcription, I obviously do not presume that people do/would leave the tech to produce a transcript or whatever without some expert eyes over it. But this suggests the main benefit of these tools would be saving time (which is what I have heard in discussions with CA colleagues who use this tech now). The idea that time-saving is something we should care about to the point that we would use these tools to accomplish it seems pretty wild to me considering that conversation analysts have defended the temporal and laborious requirements for rigorous conversation analysis (e.g., see Sidnell, 2010). The time being “saved” is actually time given away; it’s the time in which analysts become familiar with their data, now sacrificed as fuel for the accelerationists’ time-annihilating engine. I haven’t heard of any use whereby this tech could do something that would change, develop, or improve ethnomethodology and conversation analysis, apart from ostensibly making it quicker.

I believe our work is of singular importance, uniquely contributing to the human effort to understand (and hopefully improve) humanity and the conditions in which we live. I just do not believe there is any conversation analytic work that is of such immediate importance that it would warrant capitulation to the inhumane technocapitalist regime dragging us into the eschaton (read: ecological collapse, arbitrary violence, and corruption of humanity’s hard-earned knowledge) just so that it could be published quicker. Notably, this just sounds like capitulation to ‘publish or perish’ and other institutional moves to squeeze as much value out of us as possible without commensurate recompense and support. What side of the class war should we be on?

Clearly automation is not the only way people might use these tools (and of course automating EMCA practice is not new, and I have been equally opposed to, for example, automated transcription using less sophisticated/convoluted machine learning procedures). I can conceive of people doing things like using tools to “improve” audio or visual clarity in data (e.g., in cases like utterances that are unclear due to technical glitches, poor recording conditions, overlapping environmental sounds etc.); however, I would argue that such uses constitute an analytic imposition (perhaps a kind of “atheoretical imperialism”, to riff off Schegloff, 1997) considering such tools are just automated statistical mass inference machines (i.e., it’s not like turning the volume up, which is just upping the signal that is already present; it’s more like “guessing” what something might sound like at a higher volume and giving you that). If one uses a so-called AI tool in this kind of manner, I think it’s uncontroversial to suggest that one would no longer be analysing data; one would be analysing the output of an unaccountable ‘guessing’ machine.

Conversation analysts already have a resource available to us (and again, one quite special to our practice) that addresses what the so-called AI tools would ostensibly provide – collaboration. As I said earlier, AI tech is fundamentally isolating. It encourages individualisation.

‘Can’t hear what that participant said? Don’t wait to ask colleagues if they can hear it; just make a robot guess at it!’.

‘Got so much data you don’t have time to transcribe? Don’t support a budding conversation analyst by looking for funding to employ them as a research assistant; just make a robot guess at it!’.

Data sessions and research collaboration more generally deal with anything AI could supposedly do for us. The constraints AI supposedly resolves are fake: they are largely a product either of institutional pressures (which are not unique to conversation analysts and are challengeable by organised solidarity and opposition) or of an arbitrary individualisation of the research process.

I think it is worth noting that, for me, EMCA serves as more than a research discipline or praxis. I have found the methodological (and thus moral) attitude and culture to be personally significant as LIFE praxis. Much of our general operating attitude (the most salient case being concern with participants’ displayed orientations) has helped me as an autistic person to guide my participation in social life. Which makes sense when you consider (Garfinkelian) reflexivity. EMCA is, to me, fundamentally LIFE-AFFIRMING. It returns home to the world the PEOPLE that the social sciences at large have put into contrived pocket realities and stored in Plato’s attic as abstractions and constructs. AI technology, as DEATH TECH, no matter its uses, will only bulldoze the whole house for a graveyard. Central to EMCA is generosity, taking seriously people’s essential role and stake in production of the world. We cannot continue to honour this if we choose to prefer MACHINE MEMBERSHIP (for a version of what I mean by this, see Reeves et al., 2025).

Schegloff (2006, p. 154), writing concerning the possibility of fruitful cooperation between EMCA and cognitive science, noted ‘if colleagues in the neuro- or cognitive sciences of cognition are to work with us, there could hardly be a more strategic place to do it. But it cannot be done in the conventional experimental settings of the past; it cannot be the product of individual minds planning and performing in splendid isolation.’. This is a practical and theoretical matter, critical of cognitive science’s theoretical imperialism into the domain of social activity by which it reduces us to ostensively-individual, ostensively-cognising, ostensive-machines. I am personally less optimistic about fruitful collaboration between EMCA and cognitive science, because a fundamental motivator of the cognitive science project is to understand cognition, and the mind as its claimed substrate, absent of the humans to whom they initially assigned the cognising role (Guest, Scharfenberg, & van Rooij, 2025). The meeting place between humanising and dehumanising can only be dehumanising.

Conversation analysts using so-called generative AI obviously doesn’t entail an explicit endorsement of cognitivism, but the ‘generative’ in ‘generative AI’ is a mask meant to hide that what it returns is a grotesque pandemonium, not ‘generative’ at all but a Frankensteinian hodgepodge of decontextualised death, the product of contorting the world into an n(for some, possibly unknown, finite n)-dimensional number space. I note, of course, that Victor’s Monster LIVED, as did and do the many real-life humans who are accused of being death while living when money, time, attention, and care are heaped on the dead-and-never-alive so-called AI ‘assistants’, ‘chat’bots; some we even call by name! (e.g., Claude). Straightforward human erasure. The above use of ‘Frankensteinian’ refers to Victor’s inhuman intentions for death.

Garfinkel (2022) noted that objects often appear to us to work by their own methods, operating under a ‘demonic order’ (p. 197) chthonic to our above-ground human interaction order; the ‘wild contingencies’ (i.e., contingent scenic properties around which people attempt to navigate and shape their action with or against). Decontextualised death and a contorted, digitised world are not the only things masked by generativity claims. Users become entangled in the wildly contingent demonic order of the so-called generative AI, an essentially inscrutable order. Their methods are reoriented toward the preference organisation and structural requirements of the machine, which are in any given instance unaccountable, but that are on the whole shaped by invisible people, both the powerful (e.g., billionaire hoarders, authoritarians) and the weak (e.g., the usually-poor people who labour to tag data for massive model training). These ‘tools’ are designed to let us think they can be tools for us to use for our own accountable purposes when really what they do is objectify us, desecrate accountability, and murder trust (for example, see Ivarsson & Lindwall, 2023). If these tools feel useful, that results from the work that the user puts in, shaping their conduct to make it appear so (Eisenmann et al., 2024). We do not need to flatter and provide purpose for this machine.

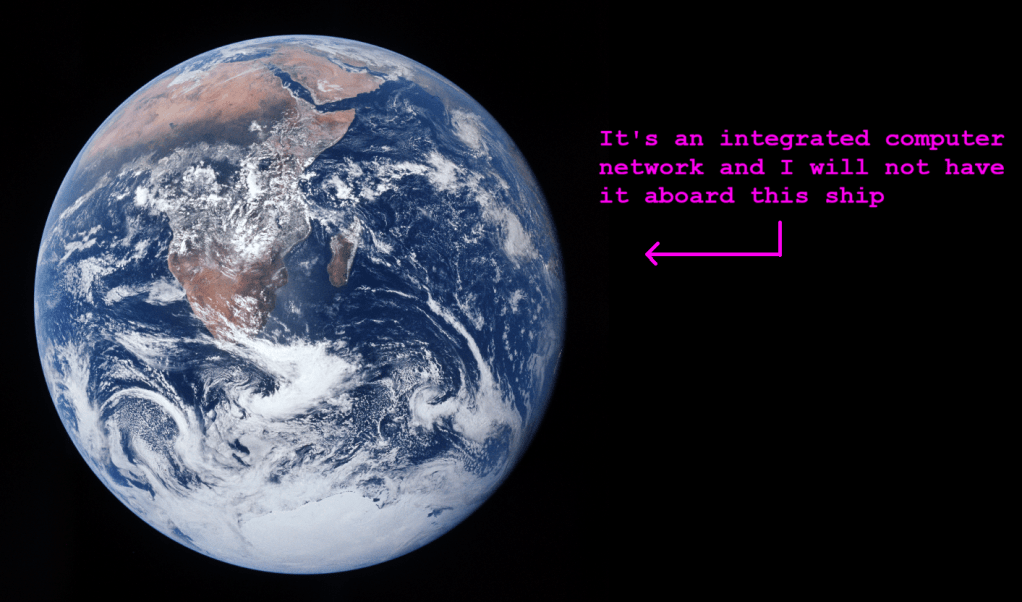

So-called generative artificial intelligence is death tech. So-called generative artificial intelligence is a colonial weapon (for example, see Russ-Smith & Lazarus, 2025). These tools smuggle in unaccountable quantification and reduction of the world to series of probabilities. They reduce complexity (i.e., hide, diminish, flatten, lose) – the same complexity which the hegemonic social sciences ignore in their presumption of pre-existing social facts (per Garfinkel, 2002) but in which we, everyone, society, the world are made real and meaningful – the plenum the understanding of which (not the reducing, or hiding, or managing) is EMCA’s whole concern. I see no moral or useful application – for anyone, let alone for conversation analysts – of so-called generative artificial intelligence. And I would suggest that, as a professional field and a community (as per, for example, ten Have, 2007), we reject its use lest the complexity it reduces next is us.

To end, I reproduce here a portion of Ivarsson’s (2026) When Machines Speak (a beautiful and terrifying piece), namely section four, titled The Consequences of Simulation:

Conversation is not only a means of coordination.

It is also a mirror.

Through the ways others respond to us, we come to understand what we have said, who we are, how we belong.

When machines simulate this mirroring, the reflection begins to warp.

We may start to treat language differently.

To expect less.

To confuse fluency with understanding.

To tolerate incoherence.

To accept response without presence.

As generative systems become more convincing, the boundary between tool and conversational partner begins to blur.

The simulation of interaction becomes interaction.

The illusion of understanding becomes socially sufficient.

This is not just a technical shift.

It changes the conditions under which meaning arises.

We begin to orient to the system as if it were a participant—even when nothing is listening.

In such exchanges, accountability dissolves.

There is no one to misunderstand us.

No one to remember.

No one to carry anything forward in time.

The risk is not that we are deceived by machines.

The risk is that we lower the threshold for what it means to be understood.

The more we speak into systems that do not carry the burden of presence, the easier it becomes to forget what real conversation demands.

We grow fluent in simulation.

References

Bolden, G. B. (2015). Transcribing as Research: “Manual” Transcription and Conversation Analysis. Research on Language and Social Interaction, 48(3), 276–280. https://doi.org/10.1080/08351813.2015.1058603

Edwards, D. (2012). Discursive and scientific psychology. British Journal of Social Psychology, 51(3), 425–435. https://doi.org/10.1111/j.2044-8309.2012.02103.x

Eisenmann, C., Mlynář, J., Turowetz, J., & Rawls, A. W. (2024). “Machine Down”: Making sense of human–computer interaction—Garfinkel’s research on ELIZA and LYRIC from 1967 to 1969 and its contemporary relevance. AI & SOCIETY, 39(6), 2715–2733. https://doi.org/10.1007/s00146-023-01793-z

Garfinkel, H. (2022). Harold Garfinkel: Studies of work in the sciences (M. Lynch, Ed.). London, England: Routledge.

Garfinkel, H., & Rawls, A. W. (2002). Ethnomethodology’s program: Working out Durkheim’s aphorism. Rowman & Littlefield Publishers.

Guest, O., Scharfenberg, N., & Rooij, I. van. (2025, May). Modern Alchemy: Neurocognitive Reverse Engineering. https://philsci-archive.pitt.edu/25289/

Ivarsson, J. (2026). When machines speak. AI & SOCIETY, 41(2), 1279–1281. https://doi.org/10.1007/s00146-025-02618-x

Ivarsson, J., & Lindwall, O. (2023). Suspicious Minds: The Problem of Trust and Conversational Agents. Computer Supported Cooperative Work (CSCW), 32(3), 545–571. https://doi.org/10.1007/s10606-023-09465-8

Reeves, S., Pelikan, H. R. M., & Cantarutti, M. N. (2025). Opening Up Human-Robot Collaboration. Proceedings of the ACM on Human-Computer Interaction, 9(7), 1–24. https://doi.org/10.1145/3757640

Russ-Smith, J., & Lazarus, M. (2025). ‘Digital colonialism’: How AI companies are following the playbook of empire. The Conversation. https://theconversation.com/digital-colonialism-how-ai-companies-are-following-the-playbook-of-empire-269285

Sacks, H. (1984). Note on methodology. In J. Atkinson & J. Heritage (Eds.), Structures of social action (pp. 22–27). Cambridge University Press.

Schegloff, E. A. (1997). Whose Text? Whose Context? Discourse & Society, 8(2), 165–187. https://doi.org/10.1177/0957926597008002002

Schegloff, E. A. (2006). On possibles. Discourse Studies, 8(1), 141–157. https://doi.org/10.1177/1461445606059563

Sidnell, J. (2010). Conversation analysis: An introduction. Wiley-Blackwell.

ten Have, P. (2002). The Notion of Member is the Heart of the Matter: On the Role of Membership Knowledge in Ethnomethodological Inquiry. Forum Qualitative Sozialforschung / Forum: Qualitative Social Research, 3(3). https://doi.org/10.17169/FQS-3.3.834

ten Have, P. (2007). Doing conversation analysis: A practical guide (2nd ed.). Sage Publications Ltd.